ChatterBaby

Initial evidence from research studies

Detailed Description

Functionality & Mechanism

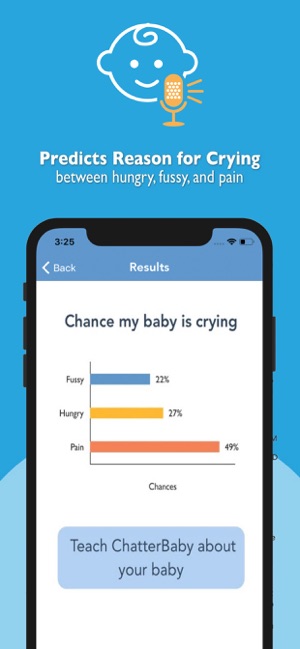

ChatterBaby is a cry-translation tool that leverages a machine learning algorithm to analyze infant vocalizations. Users initiate a session by recording a short audio sample of an infant's cry using a mobile device. The system processes the recording, comparing its acoustic features to a database of over 1,500 validated sounds. The interface then presents a probabilistic classification of the cry's likely cause, categorized as hunger, fussiness, or pain, to inform caregiver response.
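The record, compare, and classify flow described above can be illustrated with a minimal sketch. This is not ChatterBaby's actual algorithm: the two features (a scaled pitch value and a fraction of silence), the centroid values, the `temperature` parameter, and the function name `classify_cry` are all hypothetical, chosen only to show how a distance-based comparison can yield a probability for each category.

```python
import math

# Hypothetical reference centroids: one mean feature vector per cry category.
# Each vector is (scaled pitch, fraction of silence). These numbers are made up
# for illustration and are not taken from the app's database.
CENTROIDS = {
    "pain": (0.9, 0.1),
    "hunger": (0.5, 0.4),
    "fussiness": (0.3, 0.7),
}


def classify_cry(features, centroids=CENTROIDS, temperature=0.1):
    """Return a probability per category based on distance to each centroid."""
    # Score each category by negative squared Euclidean distance, so that
    # closer centroids receive higher scores.
    scores = {
        label: -sum((f - c) ** 2 for f, c in zip(features, centroid)) / temperature
        for label, centroid in centroids.items()
    }
    # Softmax turns the scores into probabilities that sum to 1.
    # Subtracting the maximum score keeps the exponentials numerically stable.
    top = max(scores.values())
    exp_scores = {label: math.exp(score - top) for label, score in scores.items()}
    total = sum(exp_scores.values())
    return {label: value / total for label, value in exp_scores.items()}


# A high-pitched sample with little silence sits closest to the "pain" centroid.
probs = classify_cry((0.85, 0.15))
best = max(probs, key=probs.get)
print(best, {label: round(p, 3) for label, p in probs.items()})
```

Returning a probability for every category, rather than a single label, matches the probabilistic presentation described above and leaves the final interpretation to the caregiver.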

Evidence & Research Context

- In a validation study (Parga et al., 2019), the cry-translation algorithm identified pain cries with 90.7% accuracy and discriminated among pain, hunger, and fussiness states with 71.5% accuracy.

- The associated research indicates that colic cries exhibit acoustic features highly similar to pain cries, with the algorithm assigning colic cries a 73% average pain rating (p < 0.001).

- The system's algorithm is grounded in research identifying optimal machine learning methods for clustering infant vocalizations based on over 6,000 distinct acoustic features.

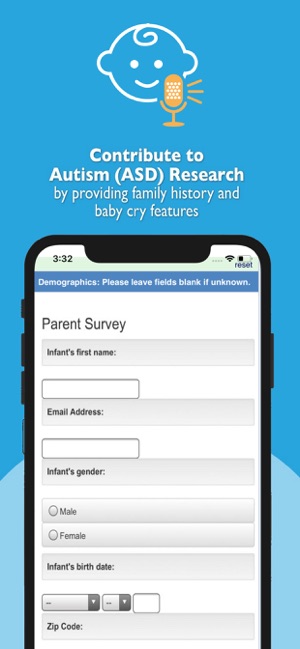

- User-submitted audio data contributes to a HIPAA-compliant research database for studies investigating links between infant vocalization patterns and neurodevelopmental outcomes.
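The two accuracy figures in the first bullet measure different things, which is why they differ. The sketch below shows one plausible reading, assuming the pain figure is the share of true pain cries that were flagged as pain and the three-way figure is the share of all cries given the correct label. The labels are toy data invented for illustration, not study results, and the function names are hypothetical.

```python
def pain_detection_rate(true_labels, predicted_labels):
    """Share of true pain cries that were predicted as pain."""
    pain_predictions = [
        predicted
        for actual, predicted in zip(true_labels, predicted_labels)
        if actual == "pain"
    ]
    return sum(p == "pain" for p in pain_predictions) / len(pain_predictions)


def three_way_accuracy(true_labels, predicted_labels):
    """Share of all cries whose predicted category matches the true category."""
    matches = sum(a == p for a, p in zip(true_labels, predicted_labels))
    return matches / len(true_labels)


# Toy labels (illustrative only, not data from the validation study).
actual = ["pain", "pain", "pain", "pain", "hunger",
          "hunger", "fussiness", "fussiness", "fussiness", "hunger"]
predicted = ["pain", "pain", "pain", "hunger", "hunger",
             "fussiness", "fussiness", "hunger", "fussiness", "hunger"]

pain_rate = pain_detection_rate(actual, predicted)
overall = three_way_accuracy(actual, predicted)
print(f"pain detection: {pain_rate:.2f}, three-way accuracy: {overall:.2f}")
```

In this toy set, confusions between hunger and fussiness pull the three-way figure below the pain figure, which mirrors the pattern reported above.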

Intended Use & Scope

This tool is intended for parents and caregivers as a supplemental aid for interpreting an infant's immediate needs. Its primary utility is to provide a preliminary, algorithmic classification of cries. ChatterBaby is not a medical device and cannot diagnose underlying conditions. A caregiver's own judgment should always take precedence, and a healthcare professional must be consulted for any medical concerns.

Studies & Publications

Peer-reviewed research associated with this app.

Applications of unsupervised machine learning in classification: Detecting optimal clustering method for baby cry

Lyu et al. (2025) · UCLA eScholarship

Describes the research-driven development of this app.

Defining and distinguishing infant behavioral states using acoustic cry analysis: is colic painful?

Parga et al. (2019) · Pediatric Research

The app's algorithm identified pain cries with 90.7% accuracy and discriminated among pain, fussiness, and hunger cries with 71.5% accuracy.

In the Media

How to Understand Baby Sounds

UCLA assistant professor Ariana Anderson developed ChatterBaby to help parents interpret infant cries, using machine learning algorithms that analyze acoustic features against a database of more than 20,000 baby sounds. The app correctly flagged more than 90% of pain cries by identifying patterns such as higher-pitched wailing with less silence for babies in pain versus lower-pitched crying with more silence for fussy babies. Originally designed for deaf parents, ChatterBaby launched publicly in 2018 as a free app that predicts whether a baby is hungry, fussy, or in pain.

App developed at UCLA helps users identify the reason behind a baby's cry

UCLA Semel Institute researcher Ariana Anderson developed ChatterBaby to help parents identify why their babies are crying, using artificial intelligence to analyze audio recordings and compare them to a database of cry patterns. Research coordinator Bianca Dang said the app correctly captures crying 90% of the time and explained that "we want both parents, not just the mother, to be able to interact with their baby better and more effectively." The app launched in 2018 after winning first prize in UCLA's 2016 Code for the Mission competition.

ChatterBaby, an app that helps parents know when and why their baby is crying, used in new research

UCLA researchers developed ChatterBaby to help deaf parents recognize and understand their baby's cries by analyzing cry acoustics to identify them as fussy, hungry, or painful using algorithms trained on over 2,000 infant cry samples. The app's algorithms correctly flagged pain cries more than 90% of the time during vaccinations, and new research found that cries from colicky babies had a 73% chance of being characterized as painful, suggesting colic may be related to pain rather than simple fussiness.

ChatterBaby app could help parents figure out why their baby is crying

UCLA statistician Ariana Anderson developed ChatterBaby to help parents interpret their baby's cries by analyzing acoustic features, originally designing the technology to assist deaf parents but expanding it for all new parents. The app analyzes over 6,000 acoustic features from five-second audio samples to determine whether a baby is hungry, fussy, or in pain, built from a database of 2,000 infant cry recordings. Anderson explained that "when I became a parent, I had just finished my PhD and I thought I was very, very smart and then I had a baby and I felt like an idiot because I couldn't understand what my baby needed."

ChatterBaby app could help parents figure out why their baby is crying

UCLA statistician Ariana Anderson developed ChatterBaby to help parents interpret their baby's cries using artificial intelligence that analyzes over 6,000 acoustic features from five-second audio samples. Anderson built the app's database using 2,000 audio samples, including cries recorded during ear piercings and vaccinations to distinguish pain cries, with a panel of mothers unanimously agreeing on hungry versus fussy classifications. The free app, released last month, aims to help parents quickly identify whether their baby is hungry, fussy, or in pain.

UCLA smartphone app translates meanings of your baby's cries

UCLA researchers developed ChatterBaby to help deaf parents identify when their baby is crying and decode what they are trying to communicate, using machine learning to analyze cry frequencies and patterns. After testing on over 2,000 cry samples, the system correctly identifies pain cries over 90 percent of the time, though it struggles more with differentiating fussy and hungry cries. The app includes a personalization feature that allows parents to train the algorithm by recording and tagging their own baby's specific cry patterns.

UCLA smartphone app translates meanings of your baby's cries

UCLA researchers developed ChatterBaby to help decode baby cries and assist deaf parents in identifying when their baby is crying, using an algorithm that categorizes cries into three types: pain, hunger, and fussiness. "As a statistician, I thought, 'Can we train an algorithm to do what my ears as a parent can do automatically?' The answer was yes," explains lead researcher Ariana Anderson. The system correctly identifies pain cries over 90 percent of the time and includes a personalization feature that allows users to train the algorithm to better understand their specific baby's sounds.

App helps new and deaf parents know when and why their baby is crying

UCLA researchers led by Ariana Anderson developed ChatterBaby to help parents identify when and why their babies are crying, using artificial intelligence trained on over 2,000 infant cry samples. The algorithms sort cries into pain, hunger, and fussiness and correctly flag pain cries more than 90 percent of the time, with deaf parent Delbert Whetter noting, "For the first time, we can confirm that our baby is crying, and then learn with a great deal of certainty what he's crying about." The app is now available for free on iPhone and Android devices.

New app uses machine learning to interpret babies' cries

UCLA's Ariana Anderson developed ChatterBaby to help parents understand why their babies are crying, using artificial intelligence to analyze uploaded cry recordings. The app may help parents with hearing loss, new parents learning to interpret cries, and women with postpartum depression, who research shows have more difficulty discerning what cries mean. Scientists launched a crying-pattern study through the app to investigate whether certain patterns can be associated with developmental disorders like autism.

App Information

Category

Evidence Profile

Initial evidence from research studies

Platforms

Updated

Feb 2021

© 2025 University of California, Los Angeles