NASA NeMO-Net

Published in academic literature

App Summary

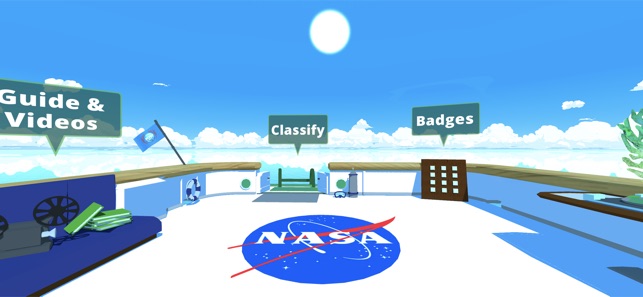

App Screenshots

Detailed Description

Functionality & Mechanism

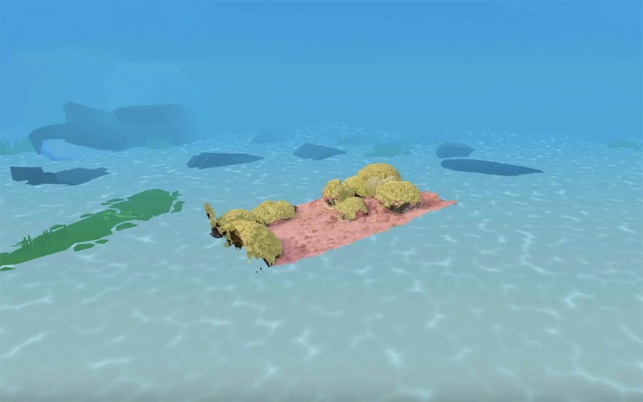

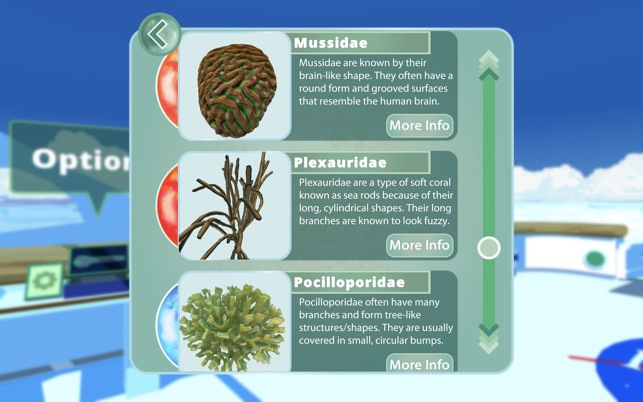

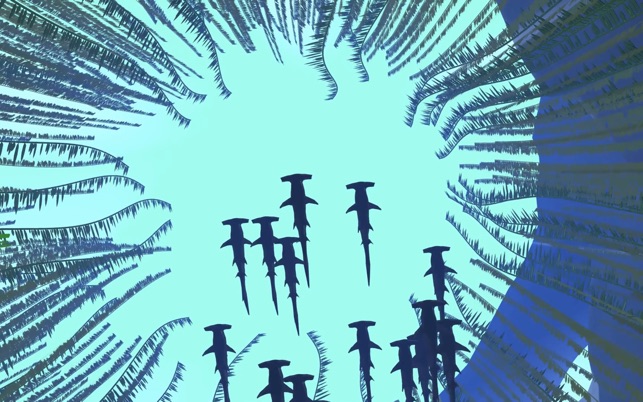

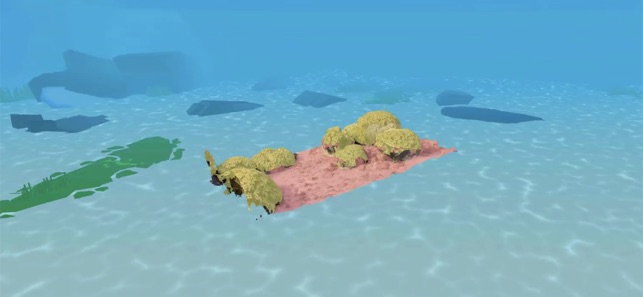

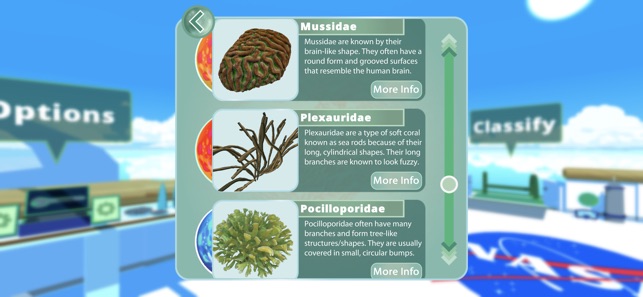

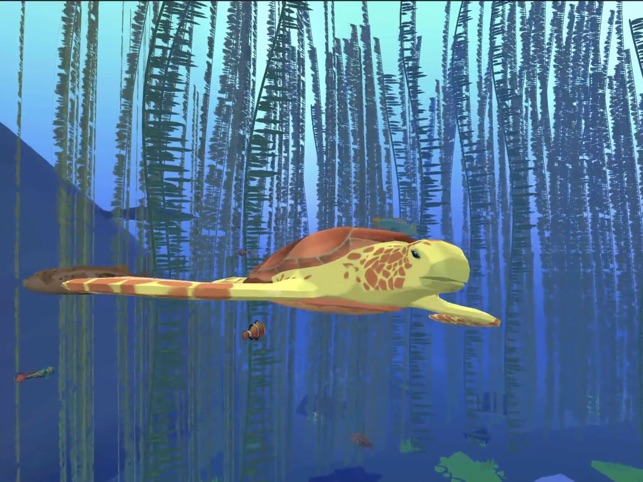

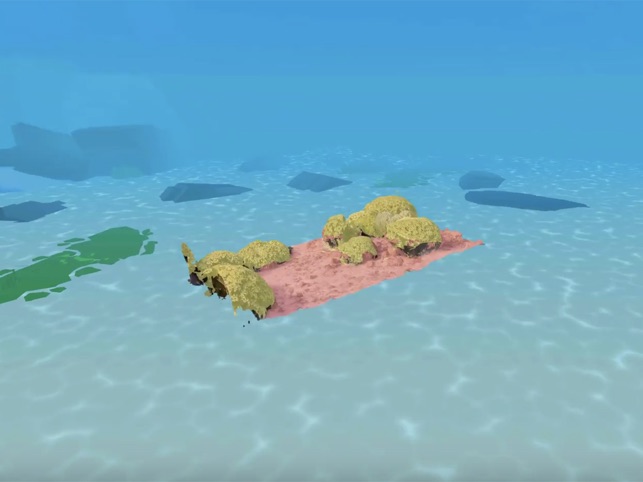

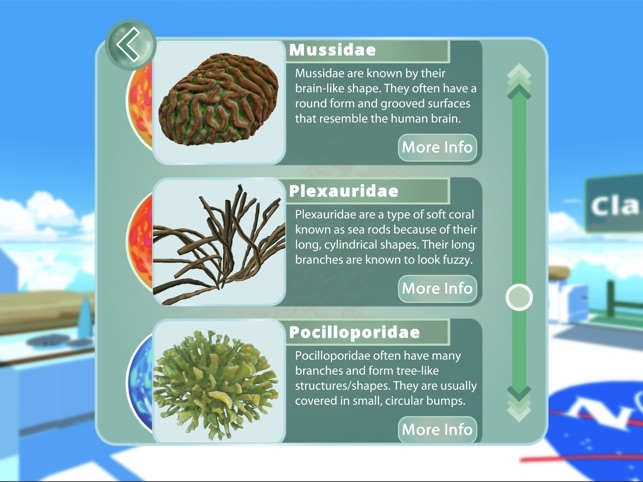

Developed by NASA, NeMO-Net is a citizen science application that generates training data for a machine learning model. The interface presents users with high-resolution 3D models of coral reefs captured with advanced remote sensing technologies, including fluid lensing cameras. In each session, users semantically segment these models by "painting" classifications onto different benthic habitats. An integrated active learning framework lets users rate and edit one another's classifications, helping build a high-quality dataset for global coral reef mapping.

Evidence & Research Context

- The system's underlying convolutional neural network demonstrated approximately 80-85% classification accuracy across nine benthic classes using spectrally variable satellite imagery from a Fijian island chain.

- A preliminary validation study reported a four-class coral classification accuracy of 94.4% during early development stages.

- The citizen science platform generated over 70,000 user classifications within its initial seven months, demonstrating its capacity for large-scale data acquisition.

- An integrated active learning framework evaluates and filters user-generated data, an established method for improving the quality of the final training dataset.

Intended Use & Scope

This application is intended for the general public to contribute to a citizen science research project. Its primary utility is to generate a large-scale, human-validated dataset for training and refining an automated global coral reef mapping algorithm. The tool does not provide direct ecological assessments but facilitates the creation of foundational data for large-scale environmental research and future conservation planning.

Studies & Publications

Peer-reviewed research associated with this app.

NeMO-Net: Gamifying 3D Labeling of Multi-Modal Reference Datasets to Support Automated Marine Habitat Mapping

van den Bergh et al. (2021) · Frontiers in Marine Science

Describes the research-driven development of this app

NASA NeMO-Net's Convolutional Neural Network: Mapping Marine Habitats with Spectrally Heterogeneous Remote Sensing Imagery

Li et al. (2020) · IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing

Describes the research-driven development of this app

In the Media

NeMO-Net

NASA developed NeMO-Net as a single-player iPad game in which players help classify coral reefs by painting 3D and 2D coral images; the resulting game data train the first neural multi-modal observation and training network for global coral reef assessment. The app leverages NASA's Pleiades supercomputer and uses active learning with mm-scale remotely sensed 3D images captured by fluid lensing, a technology that removes ocean wave distortion. Current global coral reef assessments suffer from segmentation errors greater than 40%, which NeMO-Net aims to reduce by analyzing reefs at unprecedented spatial and temporal scales.

Map the World's Coral Reefs for NASA with NeMO-Net

NASA scientists created NeMO-Net to help map the world's coral reefs by having players trace corals in satellite photos, training algorithms to automatically identify reef features. The game greets new players with oceanographer Sylvia Earle explaining "Your mission is to take command of a research vessel, and travel the world collecting data on the ocean." Players use in-game paintbrushes to color-code coral and ocean-floor features in three dimensions while learning to identify different types of corals.

NASA NeMO-Net video game helps researchers understand global coral reef health

NASA's Ames Research Center developed NeMO-Net to address coral reef conservation challenges, using a video game approach that trains artificial intelligence tools while engaging citizen scientists. Lead author Jarrett van den Bergh explains that "vast amounts of 3D coral reef imagery need to be classified so that we can get an idea of how coral reef ecosystems are faring over time." The game utilizes convolutional neural networks to automatically analyze complex underwater imagery collected from divers, snorkelers, and satellites.

NASA asks gamers to map coral reefs with NeMO-Net

NASA Ames Research Center developed NeMO-Net to help analyze coral reef data through citizen science gaming, using 3D "fluid lensing" camera images captured from locations including Puerto Rico, Guam and American Samoa. "NeMO-Net leverages the most powerful force on this planet: not a fancy camera or a supercomputer, but people," said principal investigator Ved Chirayath, who developed the neural network that uses player input to build a global coral map. The game trains NASA's Pleiades supercomputer to recognize corals through machine learning, with classification accuracy improving as more players participate.

NASA Calls on Gamers, Citizen Scientists to Help Map World's Corals

NASA's Ames Research Center developed NeMO-Net to help map the world's coral reefs by having gamers and citizen scientists classify corals using 3D ocean floor images captured by fluid-lensing cameras. "NeMO-Net leverages the most powerful force on this planet: not a fancy camera or a supercomputer, but people," said principal investigator Ved Chirayath. Players interact with real NASA data while virtually traveling on the research vessel Nautilus, with their input training NASA's Pleiades supercomputer to recognize corals from ocean imagery.

App Information

© 2025 NASA

Developer Links

Privacy Policy

NASA NeMO-Net

Free